The Financialization of AI Is Happening in Real Time

Artificial intelligence is the dominant technological narrative of this decade. Capital is flowing into foundation models, data centers, GPU manufacturers, and enterprise AI tooling at historic scale. Crypto, as it has done with every major macro narrative, is attempting to financialize that momentum.

In 2020 it was DeFi.

In 2021 it was NFTs.

Today, it is AI tokens.

Billions in market capitalization have rotated into projects positioning themselves at the intersection of blockchain and machine intelligence. But beneath the surface, these assets fall into two very different categories:

- Decentralized AI infrastructure

- Speculative AI narrative tokens

The critical question for investors and builders is straightforward:

Are these networks actually building defensible AI infrastructure, or are we witnessing the early stages of another reflexive speculative cycle?

To answer that, we need to separate substance from velocity.

Category One: Decentralized AI Infrastructure

The strongest argument for AI tokens rests on a structural premise: artificial intelligence requires compute, coordination, and incentives, and crypto networks are purpose-built to coordinate distributed participants through tokenized rewards.

Two leading examples illustrate this infrastructure thesis.

Bittensor (TAO) – A Market for Intelligence

Bittensor proposes a decentralized marketplace for machine intelligence. Rather than a single centralized lab training models behind closed APIs, it incentivizes participants to contribute machine learning outputs to specialized subnets. These subnets are evaluated and ranked by peers, and rewards are distributed in TAO based on perceived utility.

Conceptually, this is ambitious. It attempts to create a permissionless intelligence network where:

- Contributors supply model outputs

- Validators assess quality

- Incentives align performance with token emission
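Here is a deliberately simplified sketch of that incentive loop. It is not Bittensor's actual mechanism, whose Yuma Consensus is considerably more involved. It assumes a hypothetical `split_emission` function. Each validator scores contributors, each validator's opinion is weighted by its stake, and a fixed per-epoch emission is divided in proportion to the resulting weighted scores. The function and its parameters (`validator_scores`, `validator_stake`) are illustrative only.

```python
from typing import Dict


def split_emission(
    emission: float,
    validator_scores: Dict[str, Dict[str, float]],
    validator_stake: Dict[str, float],
) -> Dict[str, float]:
    """Hypothetical, simplified reward split (NOT Bittensor's Yuma Consensus).

    Each validator scores contributors; each validator's scores are weighted
    by its share of total stake; the epoch's emission is then divided in
    proportion to each contributor's stake-weighted score.
    """
    total_stake = sum(validator_stake.values())
    if total_stake <= 0:
        raise ValueError("total validator stake must be positive")

    weighted: Dict[str, float] = {}
    for validator, scores in validator_scores.items():
        stake_weight = validator_stake[validator] / total_stake
        for contributor, score in scores.items():
            weighted[contributor] = weighted.get(contributor, 0.0) + stake_weight * score

    total_score = sum(weighted.values())
    if total_score == 0:
        # Nobody produced anything validators valued: no rewards are allocated.
        return {contributor: 0.0 for contributor in weighted}
    return {c: emission * w / total_score for c, w in weighted.items()}


if __name__ == "__main__":
    rewards = split_emission(
        emission=100.0,
        validator_scores={"v1": {"a": 0.9, "b": 0.1}, "v2": {"a": 0.5, "b": 0.5}},
        validator_stake={"v1": 3.0, "v2": 1.0},
    )
    print(rewards)
```

In this example, the more heavily staked validator's preferences dominate, so contributor "a" receives about 80 of the 100 emitted tokens. The payout is funded entirely by emission. Nothing in the loop requires anyone outside the network to have paid for the output.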

The upside narrative is enormous: a decentralized alternative to centralized AI labs. If intelligence becomes modular and composable, a token-incentivized marketplace could theoretically coordinate global contributors.

But complexity is both a strength and a risk.

Key structural questions remain:

- Is demand for subnet outputs organic or subsidized by emissions?

- Does the token accrue value from real usage or primarily from scarcity?

- Can decentralized evaluation meaningfully compete with vertically integrated AI companies?

Bittensor represents the purest form of the decentralized intelligence thesis. It is infrastructure-first, but it also relies heavily on incentive design and token economics.

Render (RENDER, formerly RNDR) – GPU Compute as a Marketplace

Where Bittensor focuses on intelligence output, Render focuses on compute supply.

The protocol connects users who need GPU rendering power with distributed providers willing to supply it. Originally focused on rendering workloads, it now increasingly benefits from broader GPU scarcity driven by AI demand.

Unlike abstract “AGI narratives,” this is tangible infrastructure:

- Compute supply

- Marketplace coordination

- Service delivery

The value proposition is clearer. AI workloads require GPU cycles. If centralized providers are constrained or expensive, decentralized marketplaces could offer flexible alternatives.

However, even here, valuation depends on real throughput:

- Are jobs flowing consistently?

- Does token demand scale with usage?

- Is the network competitive with centralized cloud providers?

In relative terms, Render sits closer to measurable infrastructure than most AI-labeled tokens. But the deciding question is still whether its value comes from fee generation or from speculative positioning.

Category Two: Tokenized AI Agents and Narrative Velocity

The second category is structurally different. It is not about infrastructure. It is about narrative, engagement, and financial velocity.

This is where things become reflexive.

Virtuals Protocol – Tokenized AI Personalities

Virtuals Protocol sits at the intersection of AI agents and onchain financialization. The premise is simple but powerful: AI-driven digital personalities can be tokenized, monetized, and speculated on.

Instead of investing in infrastructure, users invest in:

- AI agents

- Social engagement

- Tokenized digital identities

This shifts the thesis from compute markets to attention markets.

In practice, the value of such systems depends less on raw AI capability and more on:

- User engagement

- Viral dynamics

- Trading activity

Revenue may exist, but price action often reflects narrative acceleration rather than discounted cash flows.

The risk profile resembles prior cycles where cultural momentum drove token appreciation faster than sustainable business models could develop.

Venice Token (VVV) – Early-Stage AI Infrastructure Narrative

VVV represents a more nascent version of the AI infrastructure theme. Positioned within the broader AI token sector, it captures speculative interest around emerging AI-enabled ecosystems.

In early-stage projects, the gap between narrative and traction is widest.

Investors must evaluate:

- Is there product-market fit?

- Are users paying for services?

- Does the token serve a necessary economic function?

Without clear value capture, tokens risk becoming high-beta expressions of the broader AI narrative rather than durable assets.

Pump.fun – Infrastructure for Speculation

While not an AI protocol itself, Pump.fun is a critical piece of the AI token meta. It lowers the barrier to launching and trading tokens, accelerating narrative velocity.

When AI becomes the dominant story, speculative infrastructure amplifies it.

This dynamic reveals something fundamental:

Crypto monetizes attention faster than traditional markets do.

That doesn’t invalidate AI tokens — but it does mean speculation can outrun substance.

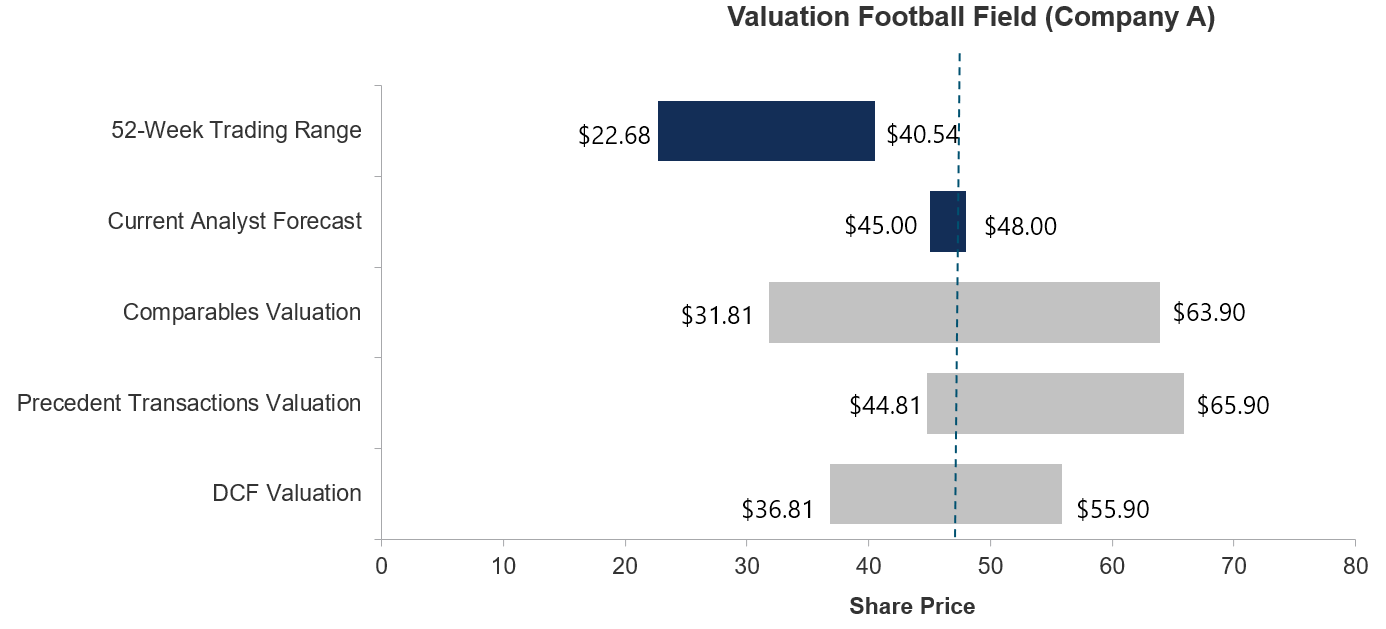

The Valuation Framework: Separating Revenue from Reflexivity

To determine whether AI tokens are infrastructure plays or speculative cycles, a disciplined framework is required.

1. Revenue Generation

Are protocols generating real fees?

- Compute usage fees

- Intelligence marketplace payments

- Platform commissions

If revenue is negligible relative to valuation, reflexivity dominates fundamentals.
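One rough way to quantify "negligible relative to valuation" is a price-to-fees ratio: valuation divided by annualized protocol fees. The sketch below annualizes 30 days of fee data linearly, which is an assumption because fees are often seasonal. The function name and the example figures are hypothetical. Whether to use circulating market cap or fully diluted valuation is a judgment call, and fully diluted valuation also prices in future emissions.

```python
def price_to_fees(valuation_usd: float, fees_30d_usd: float) -> float:
    """Hypothetical metric: valuation divided by annualized protocol fees."""
    # Annualize linearly from a 30-day window (a simplifying assumption).
    annualized_fees = fees_30d_usd * 365 / 30
    if annualized_fees <= 0:
        # No fee revenue at all: the valuation rests entirely on expectations.
        return float("inf")
    return valuation_usd / annualized_fees


if __name__ == "__main__":
    # Hypothetical protocol: $1B valuation, $1M in fees over the last 30 days.
    ratio = price_to_fees(1_000_000_000, 1_000_000)
    print(f"price-to-fees: {ratio:.1f}x")
```

With these made-up inputs the ratio is roughly 82. The higher the ratio, the more the price depends on expected growth rather than on current revenue.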

2. Token Value Capture

Even if revenue exists, does the token capture it?

Key mechanisms include:

- Fee burns

- Staking rewards funded by fees rather than by new emissions

- Required utility for network access

If revenue accrues to service providers or the platform but not to token holders, long-term price appreciation becomes tenuous.
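Revenue and value capture can diverge sharply. The minimal sketch below assumes a hypothetical protocol that routes a fixed share of fees to token buyback-and-burn and another share to stakers. The remainder goes to service providers and the treasury. Only the first two reach holders directly. The `FeeRouting` class and its parameters are illustrative, and required-utility demand is not modeled.

```python
from dataclasses import dataclass


@dataclass
class FeeRouting:
    """Hypothetical split of protocol fees; shares are fractions of total fees."""
    burn_share: float    # fraction used to buy back and burn tokens
    staker_share: float  # fraction paid out to stakers

    def __post_init__(self) -> None:
        if self.burn_share < 0 or self.staker_share < 0:
            raise ValueError("shares must be non-negative")
        if self.burn_share + self.staker_share > 1:
            raise ValueError("shares cannot exceed 100% of fees")

    def holder_capture(self, fees_usd: float) -> float:
        """Dollar value of fees that reaches token holders via burns or staking."""
        return fees_usd * (self.burn_share + self.staker_share)


if __name__ == "__main__":
    # Hypothetical: $10M in annual fees, but only 5% burned and 5% paid to stakers.
    routing = FeeRouting(burn_share=0.05, staker_share=0.05)
    print(routing.holder_capture(10_000_000))
```

In this made-up case the protocol earns $10M, but only about $1M of it flows to token holders. A valuation built on the full $10M would overstate what the token actually captures.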

3. Competitive Moat

Can decentralized AI networks compete with centralized giants?

Centralized AI labs benefit from:

- Capital concentration

- Proprietary datasets

- Hardware vertical integration

Decentralized networks must compete on coordination efficiency and incentive alignment. That is non-trivial.

4. Emissions Pressure

High token emissions distort valuation.

If emitted rewards are sold faster than organic demand can absorb them, price support depends on constant inflows of new capital. That is the classic setup for boom-bust cycles.
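Emissions pressure can be framed as a daily flow comparison. The sketch below assumes that reward recipients sell a fixed fraction of newly emitted tokens at the current price. It compares that sell pressure with organic, usage-driven buying. The function and all inputs are hypothetical. A negative result means the gap must be filled by new speculative inflows.

```python
def net_daily_flow_usd(
    daily_emission_tokens: float,
    token_price_usd: float,
    sell_fraction: float,
    organic_buy_usd: float,
) -> float:
    """Hypothetical: organic buying minus the USD value of emitted tokens that get sold."""
    if not 0.0 <= sell_fraction <= 1.0:
        raise ValueError("sell_fraction must be between 0 and 1")
    # Assumes recipients sell a constant share of new emissions at the current price.
    emission_sell_pressure = daily_emission_tokens * token_price_usd * sell_fraction
    return organic_buy_usd - emission_sell_pressure


if __name__ == "__main__":
    # Hypothetical: 10,000 tokens/day at $5, 70% sold, $20,000/day of organic demand.
    print(net_daily_flow_usd(10_000, 5.0, 0.7, 20_000))
```

With these inputs the network runs a deficit of about $15,000 per day. Holding organic demand fixed, higher emissions or a higher sell fraction widens the deficit.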

Historical Parallels: DeFi, NFTs, and the Pattern of Overextension

Crypto cycles follow a familiar pattern:

- A real technological innovation emerges

- Tokens financialize it

- Capital floods in

- Valuations overshoot

- Survivors consolidate

During DeFi Summer in 2020, decentralized exchanges and lending markets were real breakthroughs. Yet hundreds of yield farms vanished.

With NFTs, digital ownership infrastructure persists, but most speculative profile-picture projects collapsed.

AI tokens may follow a similar trajectory.

The infrastructure thesis is credible. Distributed compute markets and intelligence coordination are legitimate problems. But the number of tokens capturing this narrative likely exceeds the number that will survive.

The Bull Case: Why AI Tokens Could Endure

Despite the risks, dismissing AI tokens entirely would be simplistic.

Several structural tailwinds support the sector:

- Explosive AI compute demand

- GPU scarcity

- Growing interest in decentralized alternatives

- Crypto-native monetization primitives

If decentralized networks can offer:

- Lower-cost compute

- Open intelligence markets

- Permissionless participation

They could occupy niches centralized providers ignore.

Furthermore, crypto enables micro-incentives at scale. That coordination layer may prove powerful in training, fine-tuning, or distributing models.

The strongest candidates will likely be those with:

- Clear product utility

- Measurable network usage

- Sustainable token economics

The Bear Case: Narrative Beta Without Durable Cash Flow

The counterargument is equally strong.

AI is capital-intensive. Training frontier models requires billions in hardware and energy expenditure. Centralized entities with access to venture capital and sovereign funding may maintain structural advantages.

Decentralized systems face coordination inefficiencies:

- Latency

- Quality control

- Governance friction

If token prices move primarily with AI headlines rather than with network throughput, valuation becomes sentiment-dependent.

That dynamic can persist in bull markets — but it rarely survives prolonged downturns.

Three Likely Outcomes

Looking forward, three scenarios appear plausible:

1. Most AI Tokens Fail

As in prior cycles, 80–90% of projects may fade once speculative intensity declines.

2. A Small Infrastructure Core Survives

Protocols that deliver real compute or intelligence markets could consolidate market share and mature into durable networks.

3. Tokenized AI Agents Become a New Primitive

Speculative AI personalities and onchain agents may persist as a hybrid of entertainment, finance, and machine interaction — not as infrastructure, but as crypto-native products.

Conclusion: Funding the Future or Fueling the Cycle?

AI tokens sit at a fascinating intersection. They are both:

- A legitimate attempt to decentralize intelligence and compute

- A high-velocity vehicle for narrative speculation

Crypto is not where frontier AI research happens. What it does exceptionally well is financialize trends, coordinate incentives, and bootstrap networks.

The determining variable will not be hype. It will be usage.

Protocols that generate sustained demand, align token value with network growth, and manage emissions responsibly stand a chance of long-term durability.

Everything else is leverage on a headline.

The opportunity in AI tokens is real — but so is the risk of overextension. As always in crypto, separating infrastructure from reflexivity is the difference between investing in the future and financing a cycle.